mirror of

https://github.com/meilisearch/meilisearch.git

synced 2024-11-30 17:14:59 +08:00

Merge #370

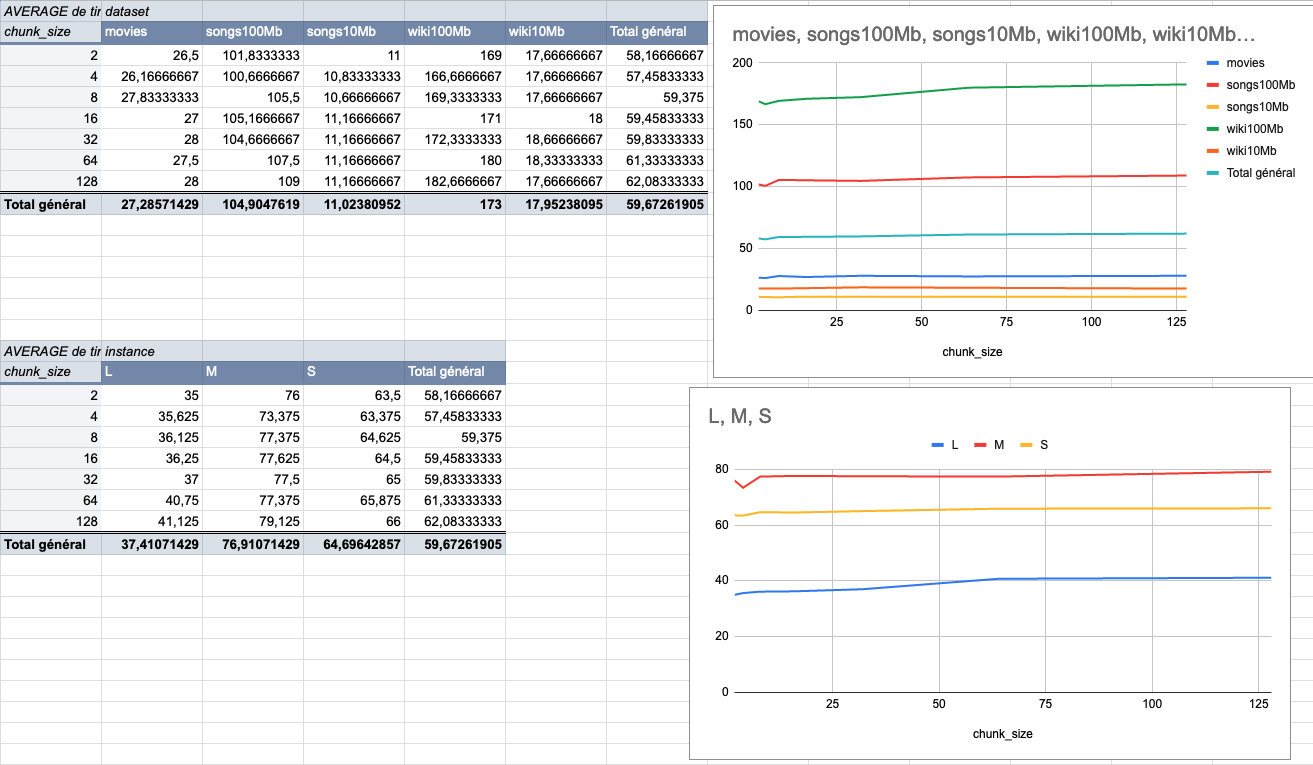

370: Change chunk size to 4MiB to better fit end-user usage r=ManyTheFish a=ManyTheFish

We ran several indexing tests using datasets of different sizes (5 datasets from 9MiB to 100MiB) on several types of VMs (`XS: 1GiB RAM, 1 VCPU`, `S: 2GiB RAM, 2 VCPU`, `M: 4GiB RAM, 3 VCPU`, `L: 8GiB RAM, 4 VCPU`). The results show that `4MiB` appears to be the best of the chunk sizes tested (`2MiB`, `4MiB`, `8MiB`, `16MiB`, `32MiB`, `64MiB`, `128MiB`). Below is the average time per chunk size:

<details> <summary>Detailed data</summary> <br>  </br> </details>

Co-authored-by: many <maxime@meilisearch.com>

commit

4c09f6838f

@@ -248,7 +248,7 @@ impl<'t, 'u, 'i, 'a> IndexDocuments<'t, 'u, 'i, 'a> {
         let chunk_iter = grenad_obkv_into_chunks(
             documents_file,
             params.clone(),
-            self.documents_chunk_size.unwrap_or(1024 * 1024 * 128), // 128MiB
+            self.documents_chunk_size.unwrap_or(1024 * 1024 * 4), // 4MiB
         );
 
         let result = chunk_iter.map(|chunk_iter| {
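To illustrate what the new default means in practice, here is a minimal standalone sketch. It assumes only what the diff shows: `documents_chunk_size` is an `Option<usize>` and the fallback is `1024 * 1024 * 4` bytes. The names `DEFAULT_CHUNK_SIZE`, `effective_chunk_size`, and `estimated_chunks` are hypothetical and not part of the codebase, and the chunk count is only a rough estimate, since the real splitting is presumably done on entry boundaries rather than exact byte offsets.

```rust
/// Fallback chunk size after this change: 4 MiB, expressed in bytes.
/// (Hypothetical constant; the real code inlines `1024 * 1024 * 4`.)
const DEFAULT_CHUNK_SIZE: usize = 1024 * 1024 * 4;

/// Hypothetical helper mirroring `self.documents_chunk_size.unwrap_or(...)`:
/// an explicitly configured size wins, otherwise the default applies.
fn effective_chunk_size(configured: Option<usize>) -> usize {
    configured.unwrap_or(DEFAULT_CHUNK_SIZE)
}

/// Rough estimate of how many chunks a dataset would be split into,
/// assuming splits fall exactly on byte boundaries (ceiling division).
/// `chunk_size` must be non-zero.
fn estimated_chunks(total_bytes: usize, chunk_size: usize) -> usize {
    (total_bytes + chunk_size - 1) / chunk_size
}

fn main() {
    const MIB: usize = 1024 * 1024;

    // No explicit setting: the 4 MiB default is used.
    let default_size = effective_chunk_size(None);
    // An explicit setting overrides the default (here, the old 128 MiB value).
    let old_size = effective_chunk_size(Some(128 * MIB));

    // Dataset sizes taken from the range mentioned in the commit message.
    for dataset_mib in [9, 100] {
        let bytes = dataset_mib * MIB;
        println!(
            "{} MiB dataset: ~{} chunk(s) at 4 MiB, ~{} chunk(s) at 128 MiB",
            dataset_mib,
            estimated_chunks(bytes, default_size),
            estimated_chunks(bytes, old_size),
        );
    }
}
```

Under these assumptions, a dataset in the tested 9MiB to 100MiB range would fit in a single chunk at the old 128MiB default but is split into several at 4MiB. The commit message itself reports only the measured timings, so any explanation for why smaller chunks performed better is left open here.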
